On October 14, 2007, Edge participated in a morning of "table-top experiments" as part of the Serpentine Gallery Experiment Marathon in London. This live event was featured along with the Edge/Serpentine collaboration: "What Is Your Formula? Your Equation? Your Algorithm? Formulae For the 21st Century."

Seirian Sumner

A cooperative foraging experiment - lessons from ants

Timothy Taylor

The Tradescant's Ark Experiment

Simon Baron-Cohen

Do women have better empathy than men?

Lewis Wolpert

How our limbs are patterned like the French flag

Steven Pinker in conversation with Marcy Kahan

The Stuff of Thought

"Life consists of propositions about life."

— Wallace Stevens ("Men Made Out Of Words")

"I just read the Life transcript book and it is fantastic. One of the better books I've read in a while. Super rich, high signal to noise, great subject."

— Kevin Kelly, Editor-At-Large, Wired

"The more I think about it the more I'm convinced that Life: What A Concept! was one of those memorable events that people in years to come will see as a crucial moment in history. After all, it's where the dawning of the age of biology was officially announced."

— Andrian Kreye, Süddeutsche Zeitung

EDGE PUBLISHES "LIFE: WHAT A CONCEPT!" TRANSCRIPT AS DOWNLOADABLE PDF BOOK [1.14.08]

Edge is pleased to announce the online publication of the complete transcript of this summer's Edge event, Life: What a Concept!, as a 43,000-word downloadable PDF Edgebook.

The event took place at Eastover Farm in Bethlehem, CT on Monday, August 27th (see below). Invited to address the topic "Life: What a Concept!" were Freeman Dyson, J. Craig Venter, George Church, Robert Shapiro, Dimitar Sasselov, and Seth Lloyd, who focused on their new, and in more than a few cases, startling research, and/or ideas in the biological sciences.

Reporting on the August event, Andrian Kreye, Feuilleton (Arts & Ideas) Editor of Süddeutsche Zeitung, wrote:

Soon genetic engineering will shape our daily life to the same extent that computers do today. This sounds like science fiction, but it is already reality in science. Thus genetic engineer George Church talks about the biological building blocks that he is able to synthetically manufacture. It is only a matter of time until we will be able to manufacture organisms that can self-reproduce, he claims. Most notably J. Craig Venter succeeded in introducing a copy of a DNA-based chromosome into a cell, which from then on was controlled by that strand of DNA.

Jordan Mejias, Arts Correspondent of Frankfurter Allgemeine Zeitung, noted that:

These are thoughts to make jaws drop... Nobody at Eastover Farm seemed afraid of a eugenic revival. What in German circles would have released violent controversies, here drifts by unopposed under mighty maple trees that gently whisper in the breeze.

The following Edge feature on the "Life: What a Concept!" August event includes a photo album; streaming video; and html files of each of the individual talks.

In April, Dennis Overbye, writing in the New York Times "Science Times," broke the story of the discovery by Dimitar Sasselov and his colleagues of five earth-like exo-planets, one of which "might be the first habitable planet outside the solar system."

At the end of June, Craig Venter announced the results of his lab's work on genome transplantation methods that allow for the transformation of one type of bacteria into another, dictated by the transplanted chromosome. In other words, one species becomes another. In talking to Edge about the research, Venter noted the following:

Now we know we can boot up a chromosome system. It doesn't matter if the DNA is chemically made in a cell or made in a test tube. Until this development, if you made a synthetic chromosome you had the question of what do you do with it. Replacing the chromosome with existing cells, if it works, seems the most effective way to replace one already in an existing cell system. We didn't know if it would work or not. Now we do. This is a major advance in the field of synthetic genomics. We now know we can create a synthetic organism. It's not a question of 'if', or 'how', but 'when', and in this regard, think weeks and months, not years.

In July, in an interesting and provocative essay in The New York Review of Books entitled "Our Biotech Future," Freeman Dyson wrote:

The Darwinian interlude has lasted for two or three billion years. It probably slowed down the pace of evolution considerably. The basic biochemical machinery of life had evolved rapidly during the few hundreds of millions of years of the pre-Darwinian era, and changed very little in the next two billion years of microbial evolution. Darwinian evolution is slow because individual species, once established, evolve very little. With rare exceptions, Darwinian evolution requires established species to become extinct so that new species can replace them.

Now, after three billion years, the Darwinian interlude is over. It was an interlude between two periods of horizontal gene transfer. The epoch of Darwinian evolution based on competition between species ended about ten thousand years ago, when a single species, Homo sapiens, began to dominate and reorganize the biosphere. Since that time, cultural evolution has replaced biological evolution as the main driving force of change. Cultural evolution is not Darwinian. Cultures spread by horizontal transfer of ideas more than by genetic inheritance. Cultural evolution is running a thousand times faster than Darwinian evolution, taking us into a new era of cultural interdependence which we call globalization. And now, as Homo sapiens domesticates the new biotechnology, we are reviving the ancient pre-Darwinian practice of horizontal gene transfer, moving genes easily from microbes to plants and animals, blurring the boundaries between species. We are moving rapidly into the post-Darwinian era, when species other than our own will no longer exist, and the rules of Open Source sharing will be extended from the exchange of software to the exchange of genes. Then the evolution of life will once again be communal, as it was in the good old days before separate species and intellectual property were invented.

It's clear from these developments, as well as others, that we are at the end of one empirical road and ready for adventures that will lead us into new realms.

This year's Annual Edge Event took place at Eastover Farm in Bethlehem, CT on Monday, August 27th. Invited to address the topic "Life: What a Concept!" were Freeman Dyson, J. Craig Venter, George Church, Robert Shapiro, Dimitar Sasselov, and Seth Lloyd, who focused on their new, and in more than a few cases, startling research, and/or ideas in the biological sciences.

Physicist Freeman Dyson envisions a biotech future which supplants physics and notes that after three billion years, the Darwinian interlude is over. He refers to an interlude between two periods of horizontal gene transfer, a subject explored in his abovementioned essay.

Craig Venter, who decoded the human genome, surprised the world in late June by announcing the results of his lab's work on genome transplantation methods that allow for the transformation of one type of bacteria into another, dictated by the transplanted chromosome. In other words, one species becomes another.

George Church, the pioneer of the Synthetic Biology revolution, thinks of the cell as an operating system, and of engineers taking the place of traditional biologists in retooling stripped-down components of cells (bio-bricks), in much the same vein as in the late 70s, when electrical engineers were working their way to the first personal computer by assembling circuit boards, hard drives, monitors, etc.

Biologist Robert Shapiro disagrees with scientists who believe that an extreme stroke of luck was needed to get life started in a non-living environment. He favors the idea that life arose through the normal operation of the laws of physics and chemistry. If he is right, then life may be widespread in the cosmos.

Dimitar Sasselov, Planetary Astrophysicist, and Director of the Harvard Origins of Life Initiative, has made recent discoveries of exo-planets ("Super-Earths"). He looks at new evidence to explore the question of how chemical systems become living systems.

Quantum engineer Seth Lloyd sees the universe as an information processing system in which simple systems such as atoms and molecules must necessarily give rise to complex structures such as life, and life itself must give rise to even greater complexity, such as human beings, societies, and whatever comes next.

A small group of journalists interested in the kind of issues that are explored on Edge were present: Corey Powell, Discover; Jordan Mejias, Frankfurter Allgemeine Zeitung; Heidi Ledford, Nature; Greg Huang, New Scientist; Deborah Treisman, New Yorker; Edward Rothstein, The New York Times; Andrian Kreye, Süddeutsche Zeitung; Antonio Regalado, Wall Street Journal. Guests included Heather Kowalski, The J. Craig Venter Institute; Ting Wu, The Wu Lab, Harvard Medical School; and the artist Stephanie Rudloe. Attending for Edge: Katinka Matson, Russell Weinberger, Max Brockman, and Karla Taylor.

We are witnessing a point at which the empirical has intersected with the epistemological: everything becomes new, everything is up for grabs. Big questions are being asked, questions that affect the lives of everyone on the planet. And don't even try to talk about religion: the gods are gone.

Following the theme of new technologies=new perceptions, I asked the speakers to take a third culture slant in the proceedings and explore not only the science but the potential for changes in the intellectual landscape as well.

We are pleased to present the transcripts of the talks and conversation along with streaming video clips (links below).

— JB

FREEMAN DYSON

The essential idea is that you separate metabolism from replication. We know modern life has both metabolism and replication, but they're carried out by separate groups of molecules. Metabolism is carried out by proteins and all kinds of other molecules, and replication is carried out by DNA and RNA. That maybe is a clue to the fact that they started out separate rather than together. So my version of the origin of life is that it started with metabolism only.

FREEMAN DYSON: First of all I wanted to talk a bit about origin of life. To me the most interesting question in biology has always been how it all got started. That has been a hobby of mine. We're all equally ignorant, as far as I can see. That's why somebody like me can pretend to be an expert.

I was struck by the picture of early life that appeared in Carl Woese's article three years ago. He had this picture of the pre-Darwinian epoch when genetic information was open source and everything was shared between different organisms. That picture fits very nicely with my speculative version of origin of life.

The essential idea is that you separate metabolism from replication. We know modern life has both metabolism and replication, but they're carried out by separate groups of molecules. Metabolism is carried out by proteins and all kinds of small molecules, and replication is carried out by DNA and RNA. That maybe is a clue to the fact that they started out separate rather than together. So my version of the origin of life is it started with metabolism only. ...

___

FREEMAN DYSON is professor of physics at the Institute for Advanced Study, in Princeton. His professional interests are in mathematics and astronomy. Among his many books are Disturbing the Universe, Infinite in All Directions, Origins of Life, From Eros to Gaia, Imagined Worlds, The Sun, the Genome, and the Internet, and most recently A Many-Colored Glass: Reflections on the Place of Life in the Universe.

CRAIG VENTER

I have come to think of life in much more a gene-centric view than even a genome-centric view, although it kind of oscillates. And when we talk about the transplant work, genome-centric becomes more important than gene-centric. From the first third of the Sorcerer II expedition we discovered roughly 6 million new genes that has doubled the number in the public databases when we put them in a few months ago, and in 2008 we are likely to double that entire number again. We're just at the tip of the iceberg of what the divergence is on this planet. We are in a linear phase of gene discovery, maybe in a linear phase of unique biological entities, if you call those species, discovery, and I think eventually we can have databases that represent the gene repertoire of our planet.

One question is, can we extrapolate back from this data set to describe the most recent common ancestor. I don't necessarily buy that there is a single ancestor. It’s counterintuitive to me. I think we may have thousands of recent common ancestors and they are not necessarily so common.

J. CRAIG VENTER: Seth's statement about digitization is basically what I've spent the last fifteen years of my career doing, digitizing biology. That's what DNA sequencing has been about. I view biology as an analog world that DNA sequencing has taken into the digital world. I'll talk about some of the observations that we have made for a few minutes, and then I will talk about how, once we can read the genetic code, we've now started the phase where we can write it. And how that is going to be the end of Darwinism.

On the reading side, some of you have heard of our Sorcerer II expedition for the last few years where we've been just shotgun sequencing the ocean. We've just applied the same tools we developed for sequencing the human genome to the environment, and we could apply it to any environment; we could dig up some soil here, or take water from the pond, and discover biology at a scale that people really have not even imagined.

The world of microbiology as we've come to know it is based on over-a-hundred-year-old technology of seeing what will grow in culture. Only about a tenth of a percent of microbiological organisms will grow in the lab using traditional techniques. We decided to go straight to the DNA world to shotgun sequence what's there, using very simple techniques of filtering seawater into different size fractions, and sequencing everything at once that's in the fractions. ...

___

J. CRAIG VENTER is one of the leading scientists of the 21st century for his visionary contributions in genomic research. He is founder and president of the J. Craig Venter Institute. The Venter Institute conducts basic research that advances the science of genomics; specializes in human genome-based medicine, infectious disease, environmental genomics, synthetic genomics and synthetic life; and explores the ethical and policy implications of genomic discoveries and advances. The Venter Institute employs more than 400 scientists and staff in Rockville, MD, and in La Jolla, CA. He is the author of A Life Decoded: My Genome: My Life.

GEORGE CHURCH

Many of the people here worry about what life is, but maybe in a slightly more general way, not just ribosomes, but inorganic life. Would we know it if we saw it? It's important as we go and discover other worlds, as we start creating more complicated robots, and so forth, to know, where do we draw the line?

GEORGE CHURCH: We've heard a little bit about the ancient past of biology, and possible futures, and I'd like to frame what I'm talking about in terms of four subjects that elaborate on that. In terms of past and future, what have we learned from the past, how does that help us design the future, what would we like it to do in the future, how do we know what we should be doing? This sounds like a moral or ethical issue, but it's actually a very practical one too.

One of the things we've learned from the past is that diversity and dispersion are good. How do we inject that into a technological context? That brings the second topic, which is, if we're going to do something, if we have some idea what direction we want to go in, what sort of useful constructions we would like to make, say with biology, what would those useful constructs be? By useful we might mean that the benefits outweigh the costs — and the risks. Not simply costs, you have to have risks, and humans as a species have trouble estimating the long tails of some of the risks, which have big consequences and unintended consequences. So that's utility. 1) What we learn from the future and the past 2) the utility 3) kind of a generalization of life.

Many of the people here worry about what life is, but maybe in a slightly more general way, not just ribosomes, but inorganic life. Would we know it if we saw it? It's important as we go and discover other worlds, as we start creating more complicated robots, and so forth, to know, where do we draw the line? I think that's interesting. And then finally — that's kind of generalizational life, at a basic level — but 4) the kind of life that we are particularly enamored of — partly because of egocentricity, but also for very philosophical reasons — is intelligent life. But how do we talk about that? ...

___

GEORGE CHURCH is Professor of Genetics at Harvard Medical School and Director of the Center for Computational Genetics. He invented the broadly applied concepts of molecular multiplexing and tags, homologous recombination methods, and array DNA synthesizers. Technology transfer of automated sequencing & software to Genome Therapeutics Corp. resulted in the first commercial genome sequence (the human pathogen H. pylori, 1994). He has served in advisory roles for 12 journals, 5 granting agencies and 22 biotech companies. Current research focuses on integrating biosystems-modeling with personal genomics & synthetic biology.

ROBERT SHAPIRO

I looked at the papers published on the origin of life and decided that it was absurd, that the thought of nature of its own volition putting together a DNA or an RNA molecule was unbelievable.

I'm always running out of metaphors to try and explain what the difficulty is. But suppose you took Scrabble sets, or any word game sets, blocks with letters, containing every language on Earth, and you heap them together and you then took a scoop and you scooped into that heap, and you flung it out on the lawn there, and the letters fell into a line which contained the words “To be or not to be, that is the question,” that is roughly the odds of an RNA molecule, given no feedback — and there would be no feedback, because it wouldn't be functional until it attained a certain length and could copy itself — appearing on the Earth.

ROBERT SHAPIRO: I was originally an organic chemist — perhaps the only one of the six of us — and worked in the field of organic synthesis, and then I got my PhD, which was in 1959, believe it or not. I had realized that there was a lot of action in Cambridge, England, which was basically organic chemistry, and I went to work with a gentleman named Alexander Todd, promoted eventually to Lord Todd, and I published one paper with him, which was the closest I ever got to the Lord. I then spent decades running a laboratory in DNA chemistry, and so many people were working on DNA synthesis — which has been put to good use as you can see — that I decided to do the opposite, and studied the chemistry of how DNA could be kicked to Hell by environmental agents. Among the most lethal environmental agents I discovered for DNA — pardon me, I'm about to imbibe it — was water. Because water does nasty things to DNA. For example, there's a process I heard you mention called DNA deamination, where it kicks off part of the coding part of DNA from the units — that was discovered in my laboratory.

Another thing water does is help the information units fall off of DNA, which is called depurination and ought to apply only to one of the subunits — but works under physiological conditions for the pyrimidines as well, and I helped elaborate the mechanism by which water helped destroy that part of DNA structure. I realized what a fragile and vulnerable molecule it was, even if it was the center of Earth life. After water, or competing with water, the other thing that really does damage to DNA, that is very much the center of hot research now — again I can't tell you to stop using it — is oxygen. If you don't drink the water and don't breathe the air, as Tom Lehrer used to say, you should be perfectly safe. ...

___

ROBERT SHAPIRO is professor emeritus of chemistry and senior research scientist at New York University. He has written four books for the general public: Life Beyond Earth (with Gerald Feinberg); Origins, a Skeptic's Guide to the Creation of Life on Earth; The Human Blueprint (on the effort to read the human genome); and Planetary Dreams (on the search for life in our Solar System).

DIMITAR SASSELOV

Is Earth the ideal planet for life? What is the future of life in our universe? We often imagine our place in the universe in the same way we experience our lives and the places we inhabit. We imagine a practically static eternal universe where we, and life in general, are born, grow up, and mature; we are merely one of numerous generations.

This is so untrue! We now know that the universe is 14 billion and Earth life is 4 billion years old: life and the universe are almost peers. If the universe were a 55-year-old, life would be a 16-year-old teenager. The universe is nowhere close to being static and unchanging either.

Together with this realization of our changing universe, we are now facing a second, seemingly unrelated realization: there is a new kind of planet out there, named super-Earths, that can provide to life all that our little Earth does. And more.

DIMITAR SASSELOV: I will start the same way, by introducing my background. I am a physicist, just like Freeman and Seth, in background, but my expertise is astrophysics, and more particularly planetary astrophysics. So that means I'm here to try to tell you a little bit of what's new in the big picture, and also to warn you that my background basically means that I'm looking for general relationships — for generalities rather than specific answers to the questions that we are discussing here today.

So, for example, I am personally more interested in the question of the origins of life, rather than the origin of life. What I mean by that is I'm trying to understand what we could learn about pathways to life, or pathways to the complex chemistry that we recognize as life. As opposed to narrowly answering the question of what is the origin of life on this planet. And that's not to say there is more value in one or the other; it's just the approach that somebody with my background would naturally try to take. And also the approach, which — I would agree to some extent with what was said already — is in need of more research and has some promise.

One of the reasons why I think there are a lot of interesting new things coming from that perspective, that is from the cosmic perspective, or planetary perspective, is because we have a lot more evidence for what is out there in the universe than we did even a few years ago. So to some extent, what I want to tell you here is some of this new evidence and why is it so exciting, in being able to actually inform what we are discussing here. ...

___

DIMITAR SASSELOV is Professor of Astronomy at Harvard University and Director, Harvard Origins of Life Initiative. Most recently his research has led him to explore the nature of planets orbiting other stars. Using novel techniques, he has discovered a few such planets, and his hope is to use these techniques to find planets like Earth. He is the founder and director of the new Harvard Origins of Life Initiative, a multidisciplinary center bridging scientists in the physical and in the life sciences, intent on studying the transition from chemistry to life and its place in the context of the Universe.

SETH LLOYD

If you program a computer at random, it will start producing other computers, other ways of computing, other more complicated, composite ways of computing. And here is where life shows up. Because the universe is already computing from the very beginning when it starts, starting from the Big Bang, as soon as elementary particles show up. Then it starts exploring — I'm sorry to have to use anthropomorphic language about this, I'm not imputing any kind of actual intent to the universe as a whole, but I have to use it for this to describe it — it starts to explore other ways of computing.

SETH LLOYD: I'd like to step back from talking about life itself. Instead I'd like to talk about what information processing in the universe can tell us about things like life. There's something rather mysterious about the universe. Not just rather mysterious, extremely mysterious. At bottom, the laws of physics are very simple. You can write them down on the back of a T-shirt: I see them written on the backs of T-shirts at MIT all the time, even in size petite. In addition to that, the initial state of the universe, from what we can tell from observation, was also extremely simple. It can be described by a very few bits of information.

So we have simple laws and simple initial conditions. Yet if you look around you right now you see a huge amount of complexity. I see a bunch of human beings, each of whom is at least as complex as I am. I see trees and plants, I see cars, and as a mechanical engineer, I have to pay attention to cars. The world is extremely complex.

If you look up at the heavens, the heavens are no longer very uniform. There are clusters of galaxies and galaxies and stars and all sorts of different kinds of planets and super-earths and sub-earths, and super-humans and sub-humans, no doubt. The question is, what in the heck happened? Who ordered that? Where did this come from? Why is the universe complex? Because normally you would think, okay, I start off with very simple initial conditions and very simple laws, and then I should get something that's simple. In fact, mathematical definitions of complexity like algorithmic information say, simple laws, simple initial conditions, imply the state is always simple. It's kind of bizarre. So what is it about the universe that makes it complex, that makes it spontaneously generate complexity? I'm not going to talk about super-natural explanations. What are natural explanations — scientific explanations of our universe and why it generates complexity, including complex things like life? ...

___

SETH LLOYD is Professor of Mechanical Engineering at MIT and Director of the W.M. Keck Center for Extreme Quantum Information Theory (xQIT). He works on problems having to do with information and complex systems from the very small — how do atoms process information, how can you make them compute, to the very large — how does society process information? And how can we understand society in terms of its ability to process information? He is the author of Programming the Universe: A Quantum Computer Scientist Takes On the Cosmos.

FRANKFURTER ALLGEMEINE ZEITUNG

August 31, 2007

FEUILLETON — Front Page

Let's play God! Life's questions: J. Craig Venter programs the future (Lasst uns Gott spielen!)

By Jordan Mejias

Was Evolution only an interlude? At the invitation of John Brockman, science luminaries such as J. Craig Venter, Freeman Dyson, Seth Lloyd, Robert Shapiro and others discussed the question: What is Life?

EASTOVER FARM, August 30th

It sounds like a seaman's yarn that the scientist with the look of an experienced seafarer has in store for us. The suntanned adventurer with the close-clipped grey beard vaunts the ocean as a sea of bacteria and viruses, unimaginable in their varieties. And in their lifestyle, as we might call it. But what do organisms live off? Like man, not off air or love alone. There can be no life without nutrients, it is said. Not true, says the sea dog. Sometimes a source of energy is enough, for instance, when energy is abundantly provided by sunlight. Could that teach us anything about our very special form of life?

J. Craig Venter, the ingenious decoder of the genome, who takes time off to sail around the world on expeditions, balances his flip-flops on his naked feet as he tells us about such astounding phenomena of life. Us, that means a few hand-picked journalists and half a dozen stars of science, invited by John Brockman, the guru of the all-encompassing "Third Culture", to his farm in Connecticut.

Relaxed, always open for a witty remark, but nevertheless with the indispensable seriousness, the scientific luminaries go to work under Brockman's direction. He, the master of the easy, direct question that unfailingly draws out the most complicated answers, the hottest speculations and debates, has for today transferred his virtual salon, always accessible on the Internet under the name Edge, to a very real and idyllic summer's day. This time the subject matter is nothing other than life itself.

When Venter speaks of life, it's almost as if he were reading from the script of a highly elaborate science fiction film. We are told to imagine organisms that not only can survive dangerous radiation, but that remain hale and hearty as they journey through the Universe. Still, he of all people, the revolutionary geneticist, warns against setting off in an overly gene-centric direction when trying to track down Life. For the way in which a gene makes itself known will depend to a large degree upon the aid of overlooked transporter genes. In spite of this he considers the genetic code a better instrument to organize living organisms than the conventional system of classification by species.

Many colleagues nod in agreement, when they are not smiling in agreement. But this cannot be all that Venter has up his sleeve. Just a short while ago, he created a stir with the announcement that his Institute had succeeded in transplanting the genome of one bacterium into another. With this, he had newly programmed an organism. Should he be allowed to do this? A question not only for scientists. Eastover Farm was lacking in ethicists, philosophers and theologians, but Venter had taken precautions. He took a year to learn from the world's major religions whether it was permissible to synthesize life in the lab. Not a single religious representative could find grounds to object. All essentially agreed: It's okay to play God.

Maybe some of the participants would have liked to hear more on the subject, but the day in Nature's lap was for identifying themes, not giving and receiving exhaustive amounts of information. A whiff of the most breathtaking visions, both good and bad, was enough. There were already frightening hues in the ultimate identity theft, to which Venter admitted with his genome exchange. What if a cell were captured by foreign DNA? Wouldn't it be a nightmare in the shape of a genuine Darwinian victory of the strong over the weak? Venter was applying dark colors here, whereas Freeman Dyson had painted us a much more mellow picture of the future.

Dyson, the great, not yet quite eighty-four-year-old youngster, physicist and futurist, regards evolution as an interlude. According to his calculations, the competition between species has gone on for just three billion years. Before that, according to Dyson, living organisms participated in horizontal gene transfers; if you will, they preferred the peaceful exchange of information among themselves. In the ten thousand years since Homo sapiens conquered the biosphere, Dyson once again sees a return of the old modus operandi, although in a modified form.

The scenario goes as follows: Cultural evolution, characterized by the transfer of ideas, has replaced the much slower biological evolution. Today, ideas, not genes, tip the scales. In availing himself of biotechnology, Man has picked up the torn pre-evolutionary thread and revived the genetic back and forth between microbes, plants and animals. Bit by bit the borders between species are disappearing. Soon only one species will remain, namely the genetically modified human, while the rules of Open Source, which guarantee the unhindered exchange of software in computers, will also apply to the exchange of genes. The evolution of life, in a nutshell, will return soon to a state of agreeable unity, as it existed in good old pre-Darwinian times, when life had not yet been separated into distinct species.

Though Venter may not trust in this future peace, he nearly matches Dyson in his futuristic enthusiasm. But he is enough of a realist to stress that he has never talked of creating new life from scratch. He is confident that he can develop new species and life forms, but will always have to rely on existing materials that he finds. Even he cannot conjure a cell out of nothing. So far, so good and so humble.

The rest is sheer bravado. He considers manipulation of human genes not only possible, but desirable. There's no question that he will continue to disappoint the inmate who once asked him to fashion an attractive cellmate, just as he refused the wish of an unsavory gentleman who yearned for mentally underdeveloped working-class people. But, Venter asks, who can object to humans having genetically beefed-up intelligence? Or to new genomes that open the door to new, undreamt-of sources of biofuel? Nobody at Eastover Farm seemed afraid of a eugenic revival. What in German circles would have released violent controversies, here drifts by unopposed under mighty maple trees that gently whisper in the breeze.

All the same, Venter does confess that such life-transforming technology, more powerful than any humanity could harness until now, inevitably plunges him in doubt, particularly when looking back on human history. Still, he looks toward the future with hope and confidence. As does George Church, the molecular geneticist from Harvard, who wouldn't be surprised if a future computer would be able to outperform the human brain. Could resourcefully mixed DNA be helpful to us? The organic chemist Robert Shapiro, Emeritus of New York University, objects strongly to viewing DNA as a monopolistic force. Will he assure us that life consists of more than DNA? But of what? Is it conceivable that there are certain forms of life we still are unable to recognize? Who wants to confirm that nothing runs without DNA? Why should life not also arise from minerals?

These are thoughts to make jaws drop, not only among laymen. Venter also is concerned that Shapiro defines life all too loosely. But both the geneticist and the chemist focus on the moment at which life is breathed into an inanimate object. This will be, in Venter's opinion, the next milestone in the investigation and conditioning of life. We can no longer beat around the bush: What is Life? Venter declines to answer; he doesn't want to be drawn into philosophical bullshit, as he says. Is a virus a life form? Must life, in order to be recognized as life, be self-reproducing? A colorful butterfly glides through the debate. Life can appear so weightless. And it is so difficult to describe and define.

Seth Lloyd, the quantum mechanic from MIT, points out mischievously that we know far more about the origin of the universe than we do about the origin of life. Using the quantum computer as his point of departure, he tries to give us an idea of the huge number of possibilities out of which life could have developed. If Albert Einstein did not wish to envisage a dice-playing god, Lloyd, the entertaining thinker, can't help but see only dice-playing, though presumably without the assistance of god. Everything reveals itself in his life panorama as a result of chance, whether here on Earth or in an incomprehensible distance.

Astrophysicist Dimitar Sasselov works also under the auspices of chance. Although his field of research necessarily widens our perspective, he can present us only a few places in the universe that could be suitable for life. Only five Super-Earths, as Sasselov calls those planets that are larger than Earth, are known to us at this point. With improved recognition technologies, perhaps a hundred million could be found in the universe in all. No, that is still, distributed throughout and applied to the entire universe, not a grand number. But the number is large enough to give us hope for real co-inhabitants of our universe. Somewhere, sometime, we could encounter microbial life.

Most likely this would be life in a form that we cannot even fathom yet. It will all depend on what we, strange life forms that we are, can acknowledge as life. At Eastover Farm our imaginative powers were already being vigorously tested.

Text: F.A.Z., 31.08.2007, No. 202 / page 33

Translated by Karla Taylor

SUEDDEUTSCHE ZEITUNG

September 3, 2007

FEUILLETON — Front Page

DARWIN WAS JUST A PHASE
(Darwin war nur eine Phase)

Country Life in Connecticut: Six scientists find the future in genetic engineering

By Andrian Kreye

The origins of life were the subject of discussion on a summer day when six pioneers of science convened at Eastover Farm in Connecticut. The physicist and scientific theorist Freeman Dyson was the first of the speakers to talk on the theme: "Life: What a Concept!" An ironic slogan for one of the most complex problems. Seth Lloyd, quantum physicist at MIT, summed it up with his remark that scientists now know everything about the origin of the Universe and virtually nothing about the origin of life. Which makes it rather difficult to deal with the new world view currently taking shape in the wake of the emerging age of biology.

The roster of thinkers had assembled at the invitation of literary agent John Brockman, who specializes in scientific ideas. The setting was distinguished. Eastover Farm sits in the part of Connecticut where the rich and famous New Yorkers who find the beach resorts of the Hamptons too loud and pretentious have settled. Here the scientific luminaries sat at long tables in the shade of the rustling leaves of maple trees, breaking just for lunch at the farmhouse.

The day remained on topic, as Brockman had invited only half a dozen journalists, to avoid slowing the thinkers down with an onslaught of too many layman's questions. The object was to have them talk about ideas mainly amongst themselves in the manner of a salon, not unlike his online forum edge.org. Not that the day went over the heads of the non-scientist guests. With Dyson, Lloyd, genetic engineer George Church, chemist Robert Shapiro, astronomer Dimitar Sasselov and biologist and decoder of the genome J. Craig Venter, six men came together, each of whom has made enormous contributions in interdisciplinary sciences, and as a consequence has mastered the ability to talk to people who are not well-read in their respective fields. This made it possible for an outsider to follow the discussions, even if at moments he was made to feel just that, as when Robert Shapiro cracked a joke about RNA that was met with great laughter from the scientists.

Freeman Dyson, a fragile gentleman of 84 years, opened the morning with his legendary provocation that Darwinian evolution represents only a short phase of three billion years in the life of this planet, a phase that will soon reach its end. According to this view, life began in primeval times with a haphazard assemblage of cells, RNA-driven organisms ensued, which, in the third phase of terrestrial life would have learned to function together. Reproduction appeared on the scene in the fourth phase, multicellular beings and the principle of death appeared in the fifth phase.

The End of Natural Selection

We humans belong to the sixth phase of evolution, which progresses very slowly by way of Darwinian natural selection. But this, according to Dyson, will soon come to an end, because men like George Church and J. Craig Venter are expected to succeed not only in reading the genome, but also in writing new genomes in the next five to ten years. This would constitute the ultimate "Intelligent Design", pun fully intended. Where this could lead is still difficult to anticipate. Yet Freeman Dyson finds a meaningful illustration. He spent the early nineteen fifties at Princeton, with mathematician John von Neumann, who designed one of the earliest programmable computers. When asked how many computers might be in demand, von Neumann assured him that 18 would be sufficient to meet the demand of a nation like the United States. Now, 55 years later, we are in the middle of the age of physics where computers play an integral role in modern life and culture.

Now though we are entering the age of biology. Soon genetic engineering will shape our daily life to the same extent that computers do today. This sounds like science fiction, but it is already reality in science. Thus genetic engineer George Church talks about the biological building blocks that he is able to synthetically manufacture. It is only a matter of time until we will be able to manufacture organisms that can self-reproduce, he claims. Most notably J. Craig Venter succeeded in introducing a copy of a DNA-based chromosome into a cell, which from then on was controlled by that strand of DNA.

Venter, a suntanned giant with the build of a surfer and the hunting instinct of a captain of industry, understands the magnitude of this feat in microbiology. And he understands the potential of his research to create biofuel from bacteria. He wouldn't dare to say it, but he very well might be a Bill Gates of the age of biology. Venter also understands the moral implications. He approached bioethicist Art Caplan in the nineties and asked him to do a study on whether in designing a new genome he would raise ethical or religious objections. Not a single religious leader or philosopher involved in the study could find a problem there. Such contract studies are debatable. But here at Eastover Farm scientists dream of a glorious future. Because science as such is morally neutral—every scientific breakthrough can be applied for good or for bad.

The sun is already turning pink behind the treetops, when Dimitar Sasselov, the Bulgarian astronomer from Harvard, once more reminds us how unique and at the same time, how unstable the balance of our terrestrial life is. In our galaxy, astronomers have found roughly one hundred million planets that could theoretically harbor organic life. Not only does Earth not have the best conditions among them; it is actually at the very edge of the spectrum. "Earth is not particularly inhabitable," he says, wrapping up his talk. Here J. Craig Venter cannot help but remark as an idealist: "But it is getting better all the time".

Translated by Karla Taylor

Andrian Kreye, Süddeutsche Zeitung

Jordan Mejias, Frankfurter Allgemeine Zeitung

RICHARD DAWKINS—FREEMAN DYSON: AN EXCHANGE

As part of this year's Edge Event at Eastover Farm in Bethlehem, CT, I invited three of the participants—Freeman Dyson, George Church, and Craig Venter—to come up a day early, which gave me an opportunity to talk to Dyson about his abovementioned essay in New York Review of Books entitled "Our Biotech Future".

I also sent the link to the essay to Richard Dawkins, and asked if he would comment on what Dyson termed the end of "the Darwinian interlude".

Early the next morning, prior to the all-day discussion (which also included as participants Robert Shapiro, Dimitar Sasselov, and Seth Lloyd) Dawkins emailed his thoughts which I read to the group during the discussion following Dyson's talk. [NOTE: Dawkins asked me to make it clear that his email below "was written hastily as a letter to you, and was not designed for publication, or indeed to be read out at a meeting of biologists at your farm!"].

Now Dyson has responded and the exchange is below.

—JB

RICHARD DAWKINS [8.27.07] Evolutionary Biologist, Charles Simonyi Professor For The Understanding Of Science, Oxford University; Author, The God Delusion

"By Darwinian evolution he [Woese] means evolution as Darwin understood it, based on the competition for survival of noninterbreeding species."

"With rare exceptions, Darwinian evolution requires established species to become extinct so that new species can replace them."

These two quotations from Dyson constitute a classic schoolboy howler, a catastrophic misunderstanding of Darwinian evolution. Darwinian evolution, both as Darwin understood it, and as we understand it today in rather different language, is NOT based on the competition for survival of species. It is based on competition for survival WITHIN species. Darwin would have said competition between individuals within every species. I would say competition between genes within gene pools. The difference between those two ways of putting it is small compared with Dyson's howler (shared by most laymen: it is the howler that I wrote The Selfish Gene partly to dispel, and I thought I had pretty much succeeded, but Dyson obviously hasn't read it!) that natural selection is about the differential survival or extinction of species. Of course the extinction of species is extremely important in the history of life, and there may very well be non-random aspects of it (some species are more likely to go extinct than others) but, although this may in some superficial sense resemble Darwinian selection, it is NOT the selection process that has driven evolution. Moreover, arms races between species constitute an important part of the competitive climate that drives Darwinian evolution. But in, for example, the arms race between predators and prey, or parasites and hosts, the competition that drives evolution is all going on within species. Individual foxes don't compete with rabbits, they compete with other individual foxes within their own species to be the ones that catch the rabbits (I would prefer to rephrase it as competition between genes within the fox gene pool).

The rest of Dyson's piece is interesting, as you'd expect, and there really is an interesting sense in which there is an interlude between two periods of horizontal transfer (and we mustn't forget that bacteria still practise horizontal transfer and have done throughout the time when eucaryotes have been in the 'Interlude'). But the interlude in the middle is not the Darwinian Interlude, it is the Meiosis / Sex / Gene-Pool / Species Interlude. Darwinian selection between genes still goes on during eras of horizontal transfer, just as it does during the Interlude. What happened during the 3-billion-year Interlude is that genes were confined to gene pools and limited to competing with other genes within the same species. Previously (and still in bacteria) they were free to compete with other genes more widely (there was no such thing as a species outside the 'Interlude'). If a new period of horizontal transfer is indeed now dawning through technology, genes may become free to compete with other genes more widely yet again.

As I said, there are fascinating ideas in Freeman Dyson's piece. But it is a huge pity it is marred by such an elementary mistake at the heart of it.

FREEMAN DYSON [8.30.07] Physicist, Institute for Advanced Study, Author, A Many-Colored Glass: Reflections on the Place of Life in the Universe

Dear Richard Dawkins,

Thank you for the E-mail that you sent to John Brockman, saying that I had made a "school-boy howler" when I said that Darwinian evolution was a competition between species rather than between individuals. You also said I obviously had not read The Selfish Gene. In fact I did read your book and disagreed with it for the following reasons.

Here are two replies to your E-mail. The first was a verbal response made immediately when Brockman read your E-mail aloud at a meeting of biologists at his farm. The second was written the following day after thinking more carefully about the question.

First response. What I wrote is not a howler and Dawkins is wrong. Species once established evolve very little, and the big steps in evolution mostly occur at speciation events when new species appear with new adaptations. The reason for this is that the rate of evolution of a population is roughly proportional to the inverse square root of the population size. So big steps are most likely when populations are small, giving rise to the "punctuated equilibrium" that is seen in the fossil record. The competition is between the new species with a small population adapting fast to new conditions and the old species with a big population adapting slowly.

In my opinion, both these responses are valid, but the second one goes more directly to the issue that divides us. Yours sincerely, Freeman Dyson.

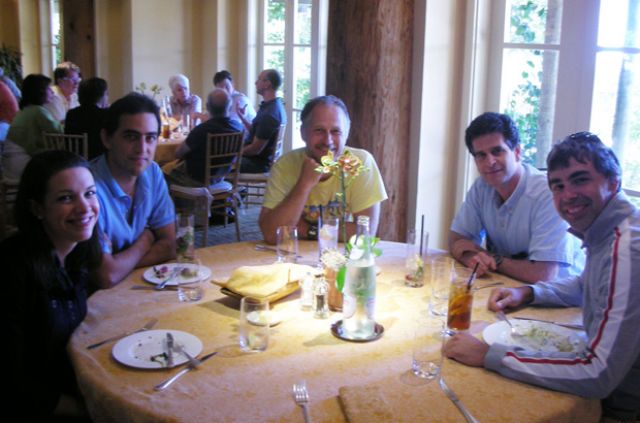

Dimitar Sasselov, George Church, Robert Shapiro, John Brockman, J. Craig Venter, Seth Lloyd, Freeman Dyson

A SHORT COURSE IN THINKING ABOUT THINKING

Edge Master Class '07

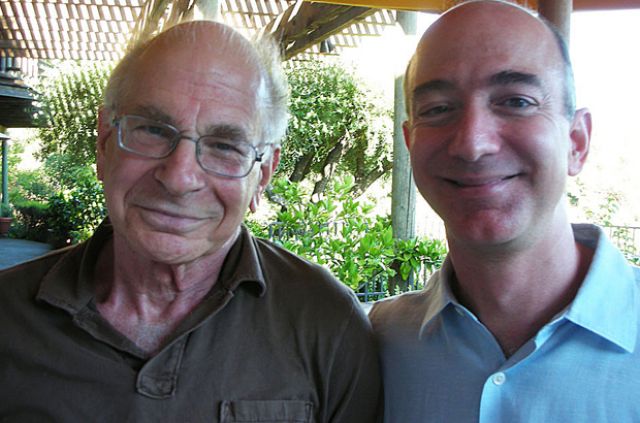

DANIEL KAHNEMAN

Auberge du Soleil, Rutherford, CA, July 20-22, 2007

AN EDGE SPECIAL PROJECT

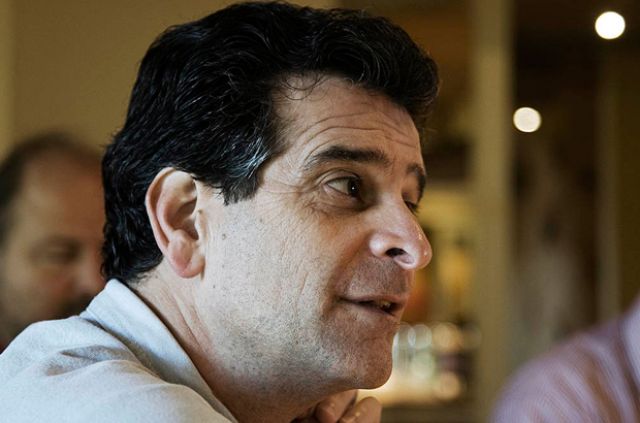

ATTENDEES: Jeff Bezos, Founder, Amazon.com; Stewart Brand, Cofounder, Long Now Foundation, Author, How Buildings Learn; Sergey Brin, Founder, Google; John Brockman, Edge Foundation, Inc.; Max Brockman, Brockman, Inc.; Peter Diamandis, Space Entrepreneur, Founder, X Prize Foundation; George Dyson, Science Historian; Author, Darwin Among the Machines; W. Daniel Hillis, Computer Scientist; Cofounder, Applied Minds; Author, The Pattern on the Stone; Daniel Kahneman, Psychologist; Nobel Laureate, Princeton University; Dean Kamen, Inventor, Deka Research; Salar Kamangar, Google; Seth Lloyd, Quantum Physicist, MIT, Author, Programming The Universe; Katinka Matson, Cofounder, Edge Foundation, Inc.; Nathan Myhrvold, Physicist; Founder, Intellectual Ventures, LLC; Event Photographer; Tim O'Reilly, Founder, O'Reilly Media; Larry Page, Founder, Google; George Smoot, Physicist, Nobel Laureate, Berkeley, Coauthor, Wrinkles In Time; Anne Treisman, Psychologist, Princeton University; Jimmy Wales, Founder, Chair, Wikimedia Foundation (Wikipedia).

INTRODUCTION

By John Brockman

Recently, I spent several months working closely with Danny Kahneman, the psychologist who is the co-creator of behavioral economics (with his late collaborator Amos Tversky), for which he won the Nobel Prize in Economics in 2002.

My discussions with him inspired a 2-day "Master Class" given by Kahneman for a group of twenty leading American business/Internet/culture innovators—a microcosm of the recently dominant sector of American business—in Napa, California in July. They came to hear him lecture on his ideas and research in diverse fields such as human judgment, decision making and behavioral economics and well-being.

[Photos: Dean Kamen, Jeff Bezos, Larry Page]

While Kahneman has a wide following among people who study risk, decision-making, and other aspects of human judgment, he is not exactly a household name. Yet among many of the top thinkers in psychology, he ranks at the top of the field.

Harvard psychologist Daniel Gilbert (Stumbling on Happiness) writes: "Danny Kahneman is simply the most distinguished living psychologist in the world, bar none. Trying to say something smart about Danny's contributions to science is like trying to say something smart about water: It is everywhere, in everything, and a world without it would be a world unimaginably different than this one." And according to Harvard's Steven Pinker (The Stuff of Thought): "It's not an exaggeration to say that Kahneman is one of the most influential psychologists in history and certainly the most important psychologist alive today. He has made seminal contributions over a wide range of fields including social psychology, cognitive science, reasoning and thinking, and behavioral economics, a field he and his partner Amos Tversky invented."

[Photos: Jimmy Wales, Nathan Myhrvold, Stewart Brand]

Here are some examples from the national media which illustrate how Kahneman's ideas are reflected in the public conversation:

In the Economist "Happiness & Economics" issue in December, 2006, Kahneman is credited with the new hedonimetry regarding his argument that people are not as mysterious as less nosy economists supposed. "The view that hedonic states cannot be measured because they are private events is widely held but incorrect."

Paul Krugman, in his New York Times column, "Quagmire Of The Vanities" (January 8, 2007), asks if the proponents of the "surge" in Iraq are cynical or delusional. He presents Kahneman's view that "the administration's unwillingness to face reality in Iraq reflects a basic human aversion to cutting one's losses—the same instinct that makes gamblers stay at the table, hoping to break even."

His articles have been picked up by the press and written about extensively. The most recent example is Jim Holt's lede piece in The New York Times Magazine, "You are What You Expect" (January 21, 2007), an article about this year's Edge Annual Question "What Are You Optimistic About?". It was prefaced with a commentary regarding Kahneman's ideas on "optimism bias".

In Jerome Groopman's New Yorker article, "What's the trouble? How Doctors Think" (January 29, 2007), Groopman looks at a medical misdiagnosis through the prism of a heuristic called "availability," which refers to the tendency to judge the likelihood of an event by the ease with which relevant examples come to mind. This tendency was first described in 1973, in Kahneman's paper with Amos Tversky when they were psychologists at the Hebrew University of Jerusalem.

Kahneman's article (with Jonathan Renshon) "Why Hawks Win" was published in Foreign Policy (January/February 2007); Kahneman points out that the answer may lie deep in the human mind. People have dozens of decision-making biases, and almost all favor conflict rather than concession. The article takes a look at why the tough guys win more than they should. Publication came during the run-up to Davos, and the article became a focus of numerous discussions and related articles.

The event was an unqualified success. As one of the attendees later wrote: "Even with the perspective of a few weeks, I can still think it is one of the all time best conferences that I have ever attended."

[Photos: George Smoot, Daniel Kahneman, Sergey Brin]

Over a period of two days, Kahneman presided over six sessions lasting about eight hours. The entire event was videotaped as an archive. Edge is pleased to present a sampling from the event consisting of streaming video of the first 10-15 minutes of each session along with the related verbatim transcripts.

—JB

DANIEL KAHNEMAN is Eugene Higgins Professor of Psychology, Princeton University, and Professor of Public Affairs, Woodrow Wilson School of Public and International Affairs. He is winner of the 2002 Nobel Prize in Economic Sciences for his pioneering work integrating insights from psychological research into economic science, especially concerning human judgment and decision-making under uncertainty.

Daniel Kahneman's Edge Bio Page

Daniel Kahneman's Nobel Prize Lecture

SESSION ONE

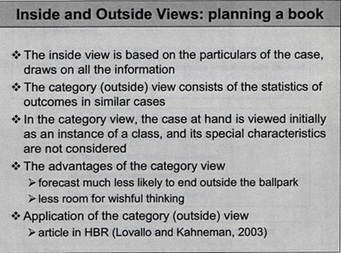

KAHNEMAN: I'll start with a topic that is called an inside-outside view of the planning fallacy. And it starts with a personal story, which is a true story.

Well over 30 years ago I was in Israel, already working on judgment and decision making, and the idea came up to write a curriculum to teach judgment and decision making in high schools without mathematics. I put together a group of people that included some experienced teachers and some assistants, as well as the Dean of the School of Education at the time, who was a curriculum expert. We worked on writing the textbook as a group for about a year, and it was going pretty well—we had written a couple of chapters, we had given a couple of sample lessons. There was a great sense that we were making progress. We used to meet every Friday afternoon, and one day we had been talking about how to elicit information from groups and how to think about the future, and so I said, Let's see how we think about the future.

I asked everybody to write down on a slip of paper his or her estimate of the date on which we would hand the draft of the book over to the Ministry of Education. That by itself by the way was something that we had learned: you don't want to start by discussing something, you want to start by eliciting as many different opinions as possible, which you then pool. So everybody did that, and we were really quite narrowly centered around two years; the range of estimates that people had—including myself and the Dean of the School of Education—was between 18 months and two and a half years.

But then something else occurred to me, and I asked the Dean of the School of Education whether he could think of other groups similar to our group that had been involved in developing a curriculum where no curriculum had existed before. At that period—I think it was the early 70s—there was a lot of activity in the biology curriculum, and in mathematics, and so he said, yes, he could think of quite a few. I asked him whether he knew specifically about these groups and he said there were quite a few of them about which he knew a lot. So I asked him to imagine them, thinking back to when they were at about the same state of progress we had reached, after which I asked the obvious question—how long did it take them to finish?

It's a story I've told many times, so I don't know whether I remember the story or the event, but I think he blushed, because what he said then was really kind of embarrassing, which was, You know I've never thought of it, but actually not all of them wrote a book. I asked how many, and he said roughly 40 percent of the groups he knew about never finished. By that time, there was a pall of gloom falling over the room, and I asked, of those who finished, how long did it take them? He thought for a while and said, I cannot think of any group that finished in less than seven years and I can't think of any that went on for more than ten.

I asked one final question before doing something totally irrational, which was, in terms of resources, how good we were at what we were doing, and where he would place us in the spectrum. His response I do remember, which was, below average, but not by much. [much laughter]

I'm deeply ashamed of the rest of the story, but there was something really instructive happening here, because there are two ways of looking at a problem: the inside view and the outside view. The inside view is looking at your problem and trying to estimate what will happen in your problem. The outside view involves making that an instance of something else—of a class. When you then look at the statistics of the class, it is a very different way of thinking about problems. And what's interesting is that it is a very unnatural way to think about problems, because you have to forget things that you know—and you know everything about what you're trying to do, your plan and so on—and to look at yourself as a point in the distribution is a very unnatural exercise; people actually hate doing this and resist it.

There are also many difficulties in determining the reference class. In this case, the reference class is pretty straightforward; it's other people developing curricula. But what's psychologically interesting about the incident is all of that information was in the head of the Dean of the School of Education, and still he said two years. There was no contact between something he knew and something he said. What psychologically to me was the truly insightful thing, was that he had all the information necessary to conclude that the prediction he was writing down was ridiculous.

COMMENT: Perhaps he was being tactful.

KAHNEMAN: No, he wasn't being tactful; he really didn't know. This is really something that I think happens a lot—the outside view comes up in something that I call 'narrow framing,' which is, you focus on the problem at hand and don't see the class to which it belongs. That's part of the psychology of it. There is no question as to which is more accurate—clearly the outside view, by and large, is the better way to go.

Let me just add two elements to the story. One, which I'm really ashamed of, is that obviously we should have quit. None of us was willing to spend seven years writing the bloody book. It was out of the question. We didn't stop and I think that really was the end of rational planning. When I look back on the humor of our writing a book on rationality, going on after we knew that what we were doing was not worth doing is not something I'm proud of.

COMMENT: So you were one of the 40 percent in the end.

KAHNEMAN: No, actually I wasn't there. I got divorced, I got married, I left the country. The work went on. There was a book. It took eight years to write. It was completely worthless. There were some copies printed, they were never used. That's the end of that story. ...
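The contrast between the inside and outside views in the curriculum story above can be put into a few lines of arithmetic. The following is only a rough sketch: the figures come from the story as told (estimates of 18 months to two and a half years, roughly 40 percent of groups never finishing, finishers taking seven to ten years), while the use of simple midpoints as "typical" values is an assumption made for illustration and not something Kahneman proposes.

```python
# Inside view: the team's own estimates from the story, in years.
inside_low, inside_high = 1.5, 2.5

# Outside view: the Dean's recollection of comparable curriculum groups.
p_never_finish = 0.40              # roughly 40 percent never finished
finish_low, finish_high = 7, 10    # finishers took between 7 and 10 years


def outside_view(p_fail, low, high):
    """Summarize the reference class: chance of finishing and a midpoint duration.

    Taking the midpoint as the typical duration is an illustrative assumption.
    """
    p_finish = 1.0 - p_fail
    midpoint_years = (low + high) / 2
    return p_finish, midpoint_years


p_finish, typical_years = outside_view(p_never_finish, finish_low, finish_high)
inside_mid = (inside_low + inside_high) / 2

# How many times longer the reference class suggests than the team's own guess.
underestimate_factor = typical_years / inside_mid

print(f"Inside view midpoint:  {inside_mid:.1f} years")
print(f"Outside view midpoint: {typical_years:.1f} years, "
      f"with a {p_finish:.0%} chance of finishing at all")
print(f"Underestimate factor:  {underestimate_factor:.2f}x")
```

Even this crude summary shows the gap the Dean did not register: the reference class points to a duration several times the team's estimate, and to a substantial chance of never finishing.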

SESSION TWO

KAHNEMAN: Let me introduce a plan for this session. I'd like to take a detour, but where I would like to end up is with a realistic theory of risk taking. But I need to take a detour to make that sensible. I'd like to start by telling you what I think is the idea that got me the Nobel Prize—should have gotten Amos Tversky and me the Nobel Prize because it was something that we did together—and it's an embarrassingly simple idea. I'm going to tell you the personal story of this, and I call it "Bernoulli's Error"—the major theory of how people take risks.

The quick history of the field is that in 1738 Daniel Bernoulli wrote a magnificent essay in which he presented many of the seminal ideas of how people take risks, published at the St. Petersburg Academy of Sciences. And he had a theory that explained why people take risks. Up to that time people were evaluating gambles by expected value, but expected value never explained risk aversion, that is, why people prefer to get sure things rather than gambles of equal expected value. And so he introduced the idea of utility (as a psychological variable), and that's what people assign to outcomes so they're not computing the weighted average of outcomes where the weights are the probabilities, they're computing the weighted average of the utilities of outcomes. Big discovery, big step in the understanding of it. It moves the understanding of risk taking from the outside world, where you're looking at values, to the inside world, where you're looking at the assignment of utilities. That was a great contribution.

He was trying to understand the decisions of merchants, really, and the example that he analyzes in some depth is the example of the merchant who has a ship loaded with spices, which he is going to send from Amsterdam to St. Petersburg during the winter, with a 5 percent probability that the ship will be lost. That's the problem. He wants to figure out how the merchant is going to do this, when the merchant is going to decide that it's worth it, and how much the merchant should be willing to pay for insurance. All of this he solves. And in the process, he goes through a very elaborate derivation of logarithms. He really explains the idea.

Bernoulli starts out from the psychological insight, which is very straightforward, that losing one ducat if you have ten ducats is like losing a hundred ducats if you have a thousand. The psychological response is proportional to your wealth. That very quickly forces a logarithmic utility function. The merchant assigns a psychological value to different states of wealth and says, if the ship makes it this is how wealthy I will be; if the ship sinks this is my wealth; this is my current wealth; these are the odds; you have a logarithmic utility function, and you figure it out. You know if it's positive, you do it; if it's not positive you don't; and the difference tells you how much you'd be willing to pay for insurance.

This is still the basic theory you learn when you study economics, and in business you basically learn variants on Bernoulli's utility theory. It's been modified, axiomatized, and formalized, and it's no longer necessarily logarithmic, but that's the basic idea.

When Amos Tversky and I decided to work on this, I didn't know a thing about decision-making; it was his field of expertise. He had written a book with his teacher and a colleague called "Mathematical Psychology" and he gave me his copy of the book and told me to read the chapter that explained utility theory. It explained utility theory, the basic paradoxes of utility theory that had been formulated, and the problems with the theory. Among the other things in that chapter was the work of some really extraordinary people (Donald Davidson, one of the great philosophers of the twentieth century, and Patrick Suppes) who had fallen in love with the modern version of expected utility theory and had tried to measure the utility of money by actually running experiments where they asked people to choose between gambles. And that's what the chapter was about.

I read the chapter, but I was puzzled by something that I didn't understand, and I assumed there was a simple answer. The gambles were formulated in terms of gains and losses, which is the way that you would normally formulate a gamble. Actually there were no losses; there was always the choice between a sure thing and a probability of gaining something. But they plotted it as if you could infer the utility of wealth: the function that they drew was the utility of wealth, but the question they were asking was about gains.

I went back to Amos and I said, this is really weird: I don't see how you can get from gambles of gains and losses to the utility of wealth. You are not asking about wealth. As a psychologist you would know that if it demands complicated mathematical transformation, something is going wrong. If you want the utility of wealth you had better ask about wealth. If you're asking about gains, you are getting the utility of gains; you are not getting the utility of wealth. And that actually was the beginning of the theory that's called "Prospect Theory," which is considered a main contribution that we made. And the contribution is what I call "Bernoulli's Error". Bernoulli thought in terms of states of wealth, which maybe makes intuitive sense when you're thinking of the merchant. But that's not how you think when you're making everyday decisions. When those great philosophers went out to do their experiments measuring utility, they did the natural thing—you could gain that much, you could have that much for sure, or have a certain probability of gaining more. And wealth is not anywhere in the picture. Most of the time people think in terms of gains and losses.

There is no question that you can make people think in terms of wealth, but you have to frame it that way, you have to force them to think in terms of wealth. Normally they think in terms of gains and losses. Basically that's the essence of Prospect Theory. It's a theory that's defined on gains and losses. It adds a parameter to Bernoulli's theory so what I call Bernoulli's Error is that he is short one parameter.

I will tell you for example what this means. You have somebody who's facing a choice between having—I won't use large units, I'll use the units I use for my students—2 million, or an equal probability of having one or four. And those are states of wealth. In Bernoulli's account, that's sufficient. It's a well-defined problem. But notice that there is something that you don't know when you're doing this: you don't know how much the person has now.

So Bernoulli in effect assumes, having utilities for wealth, that your current wealth doesn't matter when you're facing that choice. You have a utility for wealth, and what you have doesn't matter. Basically the idea that you're figuring gains and losses means that what you have does matter. And in fact in this case it does.

When you stop to think about it, people are much more risk-averse when they are looking at it from below than when they're looking at it from above. When you ask who is more likely to take the two million for sure, the one who has one million or the one who has four, it is very clear that it's the one with one, and that the one with four might be much more likely to gamble. And that's what we find.
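The contrast can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the fitted model from the research: the square-root curvature and the loss-aversion factor of 2.0 in `value` are hypothetical choices, and the function names are invented for this example. The point is only that a Bernoulli-style evaluation takes no current-wealth input at all, while an evaluation defined on gains and losses changes with where the person starts.

```python
import math

SURE = 2.0                          # final wealth, in millions
GAMBLE = [(0.5, 1.0), (0.5, 4.0)]   # (probability, final wealth in millions)

def bernoulli_advantage_of_sure():
    """Utility of the sure thing minus expected utility of the gamble,
    using log utility of final wealth. Current wealth is not an input."""
    gamble_utility = sum(p * math.log(w) for p, w in GAMBLE)
    return math.log(SURE) - gamble_utility

def value(change, curvature=0.5, loss_aversion=2.0):
    """Illustrative value of a gain or loss relative to a reference point.
    The curvature and loss_aversion defaults are assumptions for this sketch."""
    if change >= 0:
        return change ** curvature
    return -loss_aversion * (-change) ** curvature

def reference_dependent_advantage_of_sure(current_wealth):
    """Same comparison, but outcomes are coded as changes from current wealth."""
    sure_value = value(SURE - current_wealth)
    gamble_value = sum(p * value(w - current_wealth) for p, w in GAMBLE)
    return sure_value - gamble_value

# Bernoulli's account gives one answer for everybody.
print("Bernoulli:", bernoulli_advantage_of_sure())

# The reference-dependent account separates the two people in the example.
for wealth in (1.0, 4.0):
    advantage = reference_dependent_advantage_of_sure(wealth)
    choice = "sure thing" if advantage > 0 else "gamble"
    print(f"has {wealth:.0f} million -> prefers the {choice}")
```

With these assumed parameters, the person who has one million sees a sure gain against a risky gain and takes the sure thing, while the person who has four million sees a sure loss against a risky loss and gambles.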

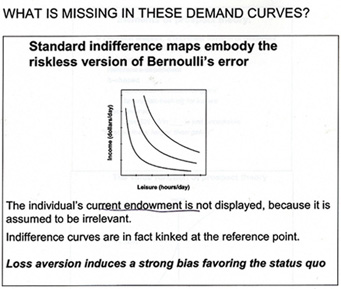

So Bernoulli's theory lacks a parameter. Here you have a standard function between leisure and income and I ask what's missing in this function. And what's missing is absolutely fundamental. What's missing is, where is the person now, on that tradeoff? In fact, when you draw real demand curves, they are kinked; they don't look anything like this. They are kinked where the person is. Where you are turns out to be a fundamentally important parameter.

Lots of very very good people went on with the missing parameter for three hundred years—theory has the blinding effect that you don't even see the problem, because you are so used to thinking in its terms. There is a way it's always done, and it takes somebody who is naïve, as I was, to see that there is something very odd, and it's because I didn't know this theory that I was in fact able to see that.

But demand curves are wrong. You always want to know where the person is. ...

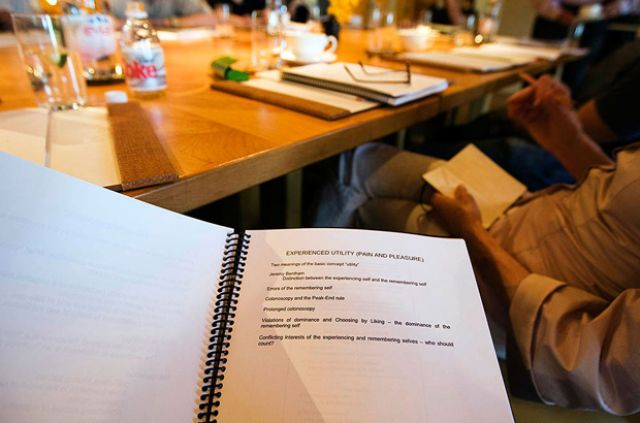

SESSION THREE

The word "utility" that was mentioned this morning has a very interesting history – and has had two very different meanings. As it was used by Jeremy Bentham, it was pain and pleasure—the sovereign masters that govern what we do and what we should do – that was one concept of utility. In economics in the twentieth century, and that's closely related to the idea of the rational agent model, the meaning of utility changed completely to become what people want. Utility is inferred from watching what people choose, and it's used to explain what they choose. Some columnist called it "wantability". It's a very different concept.

One of the things I did some 15 years ago was draw a distinction between them, which obviously needed drawing, just to give them names. So "decision utility" is the weight that you assign to something when you're choosing it, and "experience utility", which is what Bentham wanted, is the experience. Once you start doing that, a lot of additional things happen, because it turns out that experience utility can be defined in at least two very different ways. One way is when a dentist asks you, Does it hurt? That's one question that's got to do with your experience of right now. But what about when the dentist asks you, Did it hurt? and he's asking about a past session. Or it can be, Did you have a good vacation? You have experience utility, which is everything that happens moment by moment by moment, and you have remembered utility, which is how you score the experience once it's over.

And some fifteen years ago or so, I started studying whether people remembered correctly what had happened to them. It turned out that they don't. And I also began to study whether people can predict how well they will enjoy what will happen to them in future. I used to call that "predictive utility", but Dan Gilbert has given it a much better name; he calls it "affective forecasting". This predicts what your emotional reactions will be. It turns out people don't do that very well, either.

Just to give you a sense of how little people know, my first experiment with predictive utility asked whether people knew how their taste for ice cream would change. We ran an experiment at Berkeley when we arrived, and advertised that you would get paid to eat ice cream. We were not short of volunteers. People at the first session were asked to list their favorite ice cream and were asked to come back. In the first experimental session they were given a regular helping of their favorite ice cream, while listening to a piece of music (Canadian rock music) that I had actually chosen. That took about ten to fifteen minutes, and then they were asked to rate their experience.

Afterward, they were also told, because they had undertaken to do so, that they would be coming to the lab every day at the same hour for I think eight working days, and every day they would have the same ice cream, the same music, and rate it. And they were asked to predict their rating tomorrow and their rating on the last day.

It turns out that people can't do this. Most people get tired of the ice cream, but some of them get kind of addicted to the ice cream, and people do not know in advance which category they will belong to. The correlation between the change that actually happened in their tastes and the change that they predicted was absolutely zero.

It turns out, and this I think is now generally accepted, that people are not good at affective forecasting. We have no problem predicting whether we'll enjoy the soup we're going to have now if it's a familiar soup, but we are not good if it's an unfamiliar experience, or a frequently repeated familiar experience. Another trivial case: we ran an experiment with plain yogurt, which students at Berkeley really didn't like at all. We had them eat yogurt for eight days, and after eight days they kind of liked it. But they really had no idea that that was going to happen. ...

SESSION FOUR

Fifteen years ago when I was doing those experiments on colonoscopies and the cold-pressor stuff, I was convinced that the experience itself is the only one that matters, and that people just make a mistake when they choose to expose themselves to more pain. I thought it was kind of obvious that people are making a mistake, particularly because when you show people the choice, they regret it; they would rather have less pain than more pain. That led me to the topic of well-being, which is the topic that I've been focusing on for more than ten years now. And the reason I got interested in that was that in the research on well-being, you can again ask, whose well-being do we care for? The remembering self (and I'll call that the remembering-evaluating self, the one that keeps score on the narrative of our life) or the experiencing self? It turns out that you can distinguish between these two. Not surprisingly, essentially all the literature on well-being is about the remembering self.

Millions of people have been asked the question, how satisfied are you with your life? That is a question to the remembering self, and there is a fair amount that we know about the happiness or the well-being of the remembering self. But the distinction between the remembering self and the experiencing self suggests immediately that there is another way to ask about well-being, and that's the happiness of the experiencing self.

It turns out that there are techniques for doing that. And the technique, which is not at all my idea, is experience sampling. You may be familiar with that: people have a cell phone or something that vibrates several times a day at unpredictable intervals. Then they get questions on the screen that say, What are you doing? and there is a menu; Who are you with? and there is a menu; and How do you feel about it? and there is a menu of feelings.
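A minimal Python sketch of the scheduling side of experience sampling might look like the following. Everything specific here is an assumption made for illustration: the waking-hours window, the number of prompts, the date, the menu options, and the function name `sample_prompt_times`. The talk specifies only that prompts arrive several times a day at unpredictable intervals and that each question comes with a menu.

```python
import random
from datetime import datetime, timedelta

def sample_prompt_times(day_start, day_end, n_prompts, rng=random):
    """Draw n_prompts distinct, unpredictable prompt times in [day_start, day_end)."""
    span_seconds = int((day_end - day_start).total_seconds())
    # Sampling second offsets without replacement gives irregular gaps.
    offsets = sorted(rng.sample(range(span_seconds), n_prompts))
    return [day_start + timedelta(seconds=s) for s in offsets]

# Hypothetical menus; the actual response options are not given in the talk.
QUESTIONS = {
    "What are you doing?": ["working", "commuting", "eating", "other"],
    "Who are you with?": ["alone", "family", "friends", "colleagues"],
    "How do you feel about it?": ["happy", "tense", "tired", "bored"],
}

# An arbitrary example day, 9:00 to 21:00, with six prompts.
start = datetime(2007, 7, 20, 9, 0)
end = datetime(2007, 7, 20, 21, 0)
prompts = sample_prompt_times(start, end, n_prompts=6, rng=random.Random(0))

for t in prompts:
    print(t.strftime("%H:%M"), "->", " / ".join(QUESTIONS))
```

Passing a seeded `random.Random` makes a schedule reproducible for testing; in a real study the times would need to stay unpredictable to the participant.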

This comes as close as you can to dispensing with the remembering self. There is an issue of memory, but the span of memory is really seconds, and people take a few seconds to do that; it's quite efficient, then you can collect a fair amount of data. So some of what I'm going to talk about is the two pictures that you get, which are not exactly the same, when you look at what makes people satisfied with their life and makes them have a good time.

But first I thought I'd show you the basic puzzles of well-being. There is a line on the "Easterlin Paradox" that goes almost straight up, which is GDP per capita. The line that isn't going anywhere is the percentage of people who say they are very happy. And that's a remembering self-type of question. It's one big puzzle of the well-being research, and it has gotten worse in the last two weeks because there are now new data on international comparisons that make the puzzle even more surprising.