Edge in the News

Artificial intelligence is playing strategy games, writing news articles, folding proteins, and teaching grandmasters new moves in Go. Some experts warn that as we make our systems more powerful, we’ll risk unprecedented dangers. Others argue that that day is centuries away, and predictions about it today are ridiculous. The American public, when surveyed, is nervous about automation, data privacy, and “critical AI system failures” that could end up killing people.

How do you grapple with a topic like that?

Two new books both take a similar approach. Possible Minds, edited by John Brockman and published last week by Penguin Press, asks 25 important thinkers — including Max Tegmark, Jaan Tallinn, Steven Pinker, and Stuart Russell — to each contribute a short essay on “ways of looking” at AI.

I was intrigued, fascinated, and alarmed in turn by the takes on AI from these researchers, many of whom have laid the very foundations of the field’s triumphs today...

...[T]here’s a lot to worry about — and get excited about and just mull — in “Possible Minds,” a collection of essays by thinkers on the cutting edge of technology and culture, edited by John Brockman, who has chronicled that world at Edge.org since 1996.

Mr. Brockman asks the 25 essayists to riff off a 1950 book, “The Human Use of Human Beings,” by pioneering tech thinker Norbert Wiener. That tome took a dim view of AI’s implications, warning that “The hour is very late, and the choice of good and evil knocks at our door.” Today’s essayists take a range of positions on whether that choice is still at hand, or has already been made, and if so, which option we’ve picked.

Our greatest living scientist constructs a history of the theory of evolution, linking together the work of six visionaries from Charles Darwin to Svante Pääbo by way of Motoo Kimura, Ursula Goodenough and Richard Dawkins. The outlier in the six is H.G. Wells, the novelist. According to Dyson, Wells was the first person to grasp the significance of “cultural evolution”, a process said here to be at least equal in importance to biological evolution. Cultural evolution consists of “changes in the life of our planet caused by the spread of ideas rather than by the spread of genes”

Mirrors today are so commonplace we barely give their origins a second thought.

In 1999, in a discussion on Edge.org, Bill Gates – founder of the Microsoft Corporation – and his peers mused over the greatest inventions of the past 2,000 years.

Tor Norretranders, the Danish science writer, nominated the humble mirror, which he equated with the advent of clothing, manners and behaviour, noting that it gave us the first notion of self-consciousness.

Can technology threaten democracy? What are the real risk factors of war? Are we really losing our manual skills, or heading into a new darkness? Today's leading scientists and thinkers answer these questions and many others in the fascinating book titled What Should We Be Worried About?

Brockman has managed to bring together a marvelous publication that gives an overview of what we can fear, or what we can learn from. Rather than claiming a monopoly on the truth, it is written in the objective spirit of science. At the very least, it is fascinating to read about the things that disturb leading scientists and thinkers.

Do you believe in books finding you? This Idea Is Brilliant, a book edited by edge.org founder John Brockman, found me last week. I don’t know if somebody had gifted it, or did I buy and forget about it? But I am just grateful for it. Published in 2018, it keeps its promise of assembling “lost, overlooked, and under-appreciated scientific concepts everyone should know”.

Most of the concepts are narrated in small essays. But the one that stayed with me is a paragraph—yes, it is only one-paragraph long —by recording producer Brian Eno on ‘Confirmation Bias’. His observation resonated in the week of cricketer Hardik Pandya’s online inquisition or persecution, depending on what your own bias or belief is!

To ring in the New Year in the most depressing and hope-crushing way possible, Dyson sat down with Edge.org.

That's what we mean by understanding how our digitized world works. But the science historian George Dyson looks further and sees the digital giving rise to an analog revolution. And that, he warns, could take control out of our hands.

The year is still fresh and perhaps you’re on a road to living well and learning more. That may mean rethinking some reading and listening routines. I’ve listed below a few that you might want to check out — most of them focus on offering new ideas, different perspectives or alternative ways to look at some issue.

If you’re curious about new social or business trends, check these websites. Edge.org (https://www.edge.org/) is a collection of ideas from some of the world’s biggest thinkers. I wrote recently about “what is your question,” which stemmed from the site’s 2018 discussion.

[ED. NOTE: George Dyson, Kafka, Heidegger, Pirsig, Arendt, Wiener, and EDGE…]

George Dyson devotes an interesting essay in Edge to exploring the evolution of the digital world from a human-directed system to algorithms that no longer depend on human programmers, and the worrying implications of this phenomenon. But Dyson does not settle for the diagnosis; he also explores an original proposal for a solution: returning cybernetics to its analogue heart.

For Dyson, what we know today as the digital revolution has not ended, but it has mutated into something very different, abandoning the possibilities of its early years and leaving behind its "childhood". For a long time now, computing has no longer conformed to the old paradigm of machines controlled by instructions that were, in turn, designed by humans who supervise their execution. . . .

"The search engine, initially an attempt to map human meaning, now defines human meaning. It controls, rather than simply catalogs or indexes, human thought..." [Continue reading George Dyson's "Childhood's End"]

Could 2019 be the year that these and other emergent technologies evolve from merely creepy to potentially totalitarian? In a New Year’s Day column published on Edge, a Web site devoted to discussions about science, technology, and philosophy, George Dyson, the science historian and author, argues that we’ve reached an inflection point. “Once it was simple: programmers wrote the instructions that were supplied to the machines,” Dyson writes. “Since the machines were controlled by these instructions, those who wrote the instructions controlled the machines.” Today, code itself has come alive: algorithms sift through our search histories, credit-card purchases, and geolocation to model our personalities and anticipate our desires. For this, a small number of people such as Mark Zuckerberg, Jeff Bezos, Sergey Brin, and Larry Page, have become unimaginably rich.

In the beginning of the essay, Dyson cites the novel Childhood’s End, written by Arthur C. Clarke in 1953, which tells the story of a peaceful alien invasion of Earth by mysterious “Overlords” who “bring many of the same conveniences now delivered by the Keepers of the Internet to Earth.” As Dyson points out, this story, much like our own story, “does not end well.”

Powerful short essay on the digital revolution. The map has become the territory. “We assume that a search engine company builds a model of human knowledge and allows us to query that model, or that some other company builds a model of road traffic and allows us to access that model. This fits our preconception that an army of programmers is still in control somewhere, but it is no longer the way the world works. The search engine is no longer a model of human knowledge, it is human knowledge. If enough drivers subscribe to a real-time map, traffic is controlled with no central model except the traffic itself. The social network is no longer a model of the social graph, it is the social graph” (1,250 words)

Over at EDGE.org, the must-read hub of intellectual inquiry and head-spinning science, Boing Boing pal and legendary book agent John Brockman is launching a new series of essays "from important third culture thinkers to address the empirically-driven and science related hot-button cultural issues of our time." First up is author George Dyson's "Childhood's End," a provocative riff on how the digital revolution has stripped much of our individual agency and that "to those seeking true intelligence, autonomy, and control among machines, the domain of analog computing, not digital computing, is the place to look."

February

2. Possible Minds: 25 Ways of Looking at AI, by John Brockman (editor)

At the highest level, debate about artificial intelligence often devolves into scenarios utopian or dystopian. Will machines make human beings the best they can be, or render them obsolete? Should we trust something potentially smarter than us? What is humanity's role in a world ruled by algorithms? Brockman, founder of the online salon Edge.org, corrals 25 big brains—ranging from Nobel Prize-winning physicist Frank Wilczek to roboticist extraordinaire Rodney Brooks—to opine on this exhilarating, terrifying future.

What should we be worried about?...This is the question that John Brockman has put to dozens of the most influential experts in the world on his Edge.org site (according to The Guardian, "the smartest web site in the world"). He asked them to confess what they were most concerned about, and to show why these topics should be addressed....

The individual responses complement each other and form a multi-layered and ambiguous picture of the contemporary world. Last but not least, the published texts, some of which were printed in advance by the weekly Respekt, together offer a fascinating insight into what leading scholars and thinkers are concerned about, which issues worry them, and which, on the other hand, they have ceased to worry about.

Everything suggests that the new millennium has buried once and for all the paradigm of the two cultures that gave rise to so much debate throughout the twentieth century, and has done so in the name of a new humanism, or even a questioning of the very principles of humanism. Many thinkers, including Francisco Fernández Buey (Para la tercera cultura, 2013), agree in seeing in this reconfiguration of the epistemic order the advent of a third culture, an expression made famous by the literary agent John Brockman based on the postulates of C.P. Snow, and one that reflects a rethinking of the notion of reality, as well as of the means used to apprehend it.

. . . The conference, The isomorphism of knowledge: projections of science in literature (twentieth-twenty-first centuries), proposes an interdisciplinary theoretical approach that places at the forefront the recent contributions of epistemocritique and the cognitive theory of figurative language and discourse, with a view to establishing, in a Hispanic and international framework, a dialogue between these two theoretical currents.

Once a year, John Brockman puts a question to leading scientists in a wide range of disciplines, whose multifaceted answers are intended to say something about the current state of knowledge. "What do you think is the most interesting (scientific) news of our time, and what is the significance of this news?" was last year's question. The result is a book that spreads more than just optimism about the future.

Initiator of the "Third Culture"

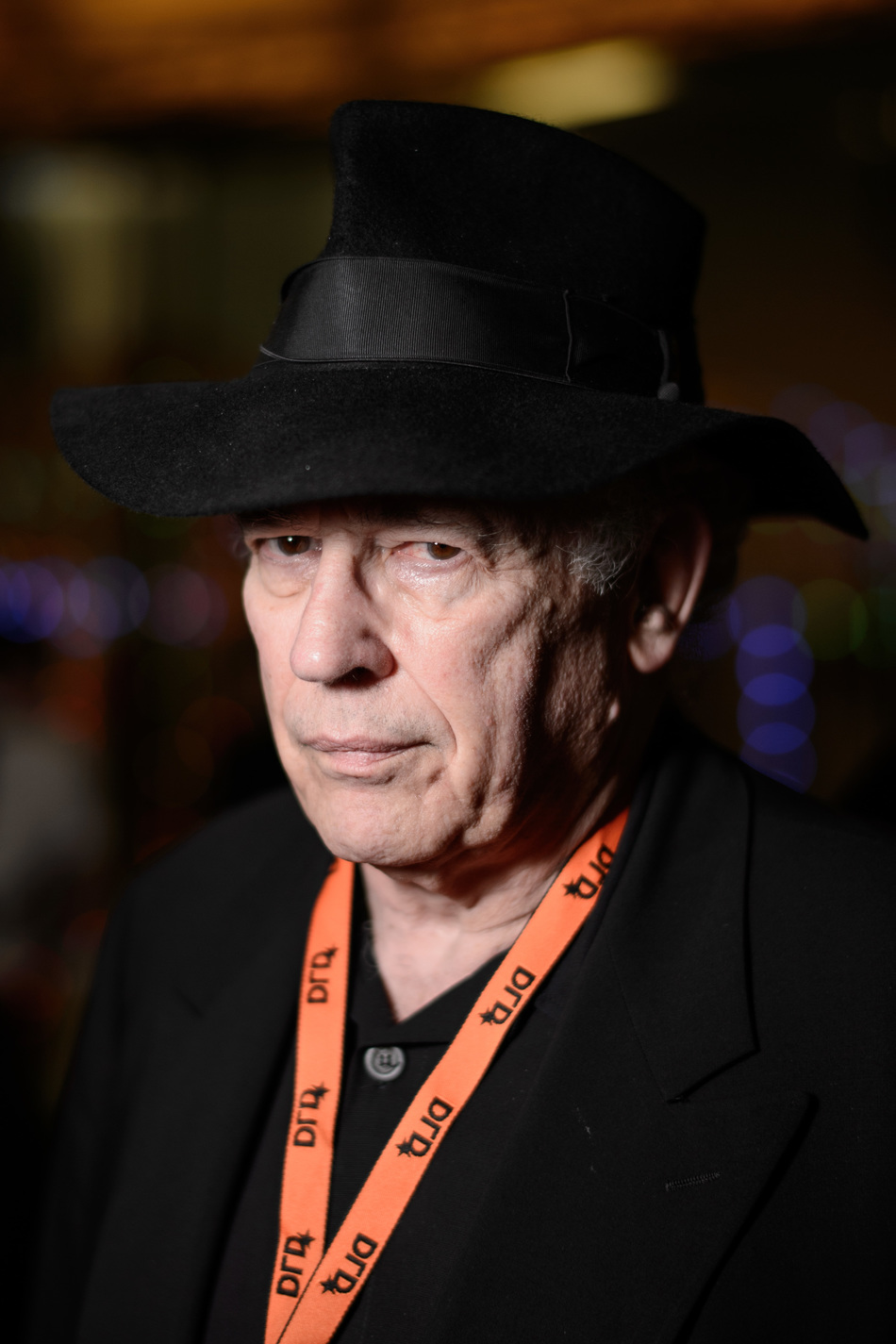

Mastermind who coined the term: John Brockman

Photo: Robert Schlesinger

On his homepage, US networker John Brockman lets the brightest minds of today think about the world of tomorrow.

On the side, Brockman runs the site edge.org, which is something like a wormhole into the future. There Brockman acts as a curator of the future, as a correspondent from tomorrow. The man, who imagines himself in a think tank with Goethe and Alan Turing, is modest enough, for all his self-assurance, not to report on what is coming. He prefers to ask questions, one each year, and he lets the brightest minds in the world answer. . . .

In 1973 he founded his agency and placed his friends as authors with publishers. The friends became stars, Brockman an impresario. His annual meetings on his farm in Connecticut are legendary. For a weekend, the IQ elite comes to him and provides answers to these questions: What is a human being? What is the brain? What is free will? What is intelligence? Questions are the most important thing, says Brockman; they form the pillars of his edifice of thought, his empire. Questions are inherently transcendent: they want answers, they want a future. And that is exactly what Brockman is all about. . .

[Continue to English translation | Continue to original German]

The Edge of Science

In the podcast, I reference an important article [Steven Pinker] wrote titled "A Biological Understanding of Human Nature" from a collection of great essays by over 20 thinkers including Jared Diamond and Ray Kurzweil. This collection is found in a book by John Brockman called The New Humanists: Science at the Edge. Brockman, author and founder of the intellectual forum Edge.org, created many of the pieces in the book as narratives based on his conversations with these intellectuals and scientific minds....

In his contribution, Pinker addresses the biases and blind spots people have when it comes to discussing the roots of our behavior. We have much to learn from Steven Pinker and if you've never read him nor heard him speak, I highly recommend doing so....